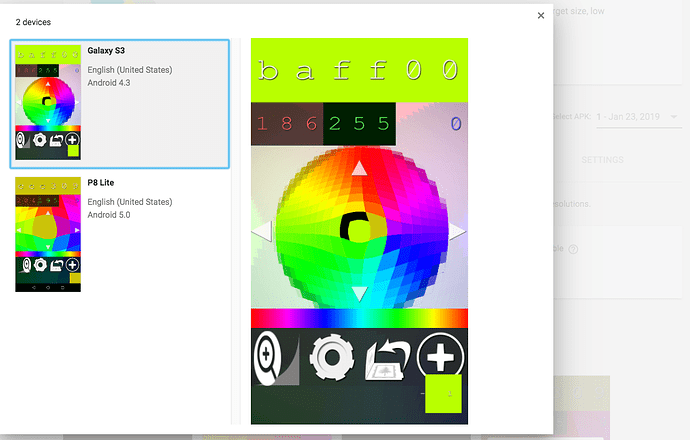

Hello! I'm having some trouble, and although I've researched this a lot, I haven't found anything that solves the problem yet. First, some proof that the app used to work (this was with SDL 2.0.8) on the Galaxy S3: the Firebase screenshot from the pre-launch report. I'm using SDL_WINDOW_OPENGL | SDL_WINDOW_SHOWN | SDL_WINDOW_RESIZABLE and gl = SDL_GL_CreateContext(sdlWindow);

The shader isn't perfect when highp float isn't available, but otherwise it worked on those devices. I was still wrapping up development when SDL 2.0.9 was released, so I upgraded. I also upgraded many of the Android SDK packages and had to change Gradle versions in the process. Now the app doesn't work right on the S3 at all… I realize it's an API level 18 device, but it's still disheartening, and I'm struggling to make it work ANYWAY. Since it sort of works, I feel like there HAS to be a way to make it work.
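For the highp issue, one common approach is to let the shader choose its own precision. GLSL ES 1.00 defines GL_FRAGMENT_PRECISION_HIGH as 1 only where the fragment stage supports highp, so a preamble like this one falls back to mediump instead of failing to compile. This is a sketch: the name kFragmentPrecisionPreamble is hypothetical, and it assumes the shader body has no #version line (that line would have to come first).

```c
#include <assert.h>
#include <string.h>

/* Hypothetical fragment-shader preamble (GLSL ES 1.00).
 * GL_FRAGMENT_PRECISION_HIGH is only defined when the fragment stage
 * supports highp, so devices without it get mediump instead of a
 * compile error. */
static const char *const kFragmentPrecisionPreamble =
    "#ifdef GL_FRAGMENT_PRECISION_HIGH\n"
    "precision highp float;\n"
    "#else\n"
    "precision mediump float;\n"
    "#endif\n";
```

Because glShaderSource accepts several strings, the preamble can be passed as the first element and the existing shader body as the second (count 2, NULL length array for null-terminated strings).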

(I can't post a second image yet, but suffice it to say that only the final draw call is visible, nothing else.)

I tried downgrading to the older revision of build.gradle and SDL 2.0.8, but some element of the Android SDK must be too new and/or intentionally broke the older devices… I can't figure it out, and I don't have an S3 to test on. I do have an older Fire TV, though, and I've been debugging what works and what doesn't on it, since it seems to have the exact same issue (based on screenshots). I'm two days into this now, maybe a little more.

I am using most of the tips found in other threads. I’ve tried every combination of:

    SDL_GL_SetAttribute(SDL_GL_DEPTH_SIZE, 0);
    SDL_GL_SetAttribute(SDL_GL_STENCIL_SIZE, 0);
    // SDL_GL_SetAttribute(SDL_GL_RED_SIZE, 5);
    // SDL_GL_SetAttribute(SDL_GL_GREEN_SIZE, 6);
    // SDL_GL_SetAttribute(SDL_GL_BLUE_SIZE, 5);
    SDL_GL_SetAttribute(SDL_GL_RED_SIZE, 8);
    SDL_GL_SetAttribute(SDL_GL_GREEN_SIZE, 8);
    SDL_GL_SetAttribute(SDL_GL_BLUE_SIZE, 8);
    SDL_GL_SetAttribute(SDL_GL_ALPHA_SIZE, 0);
    // SDL_GL_SetAttribute(SDL_GL_ALPHA_SIZE, 8);
    // SDL_GL_SetAttribute(SDL_GL_ACCELERATED_VISUAL, 0);
    // SDL_GL_SetAttribute(SDL_GL_ALPHA_SIZE, 5);
    // SDL_GL_SetAttribute(SDL_GL_FRAMEBUFFER_SRGB_CAPABLE, 1);
    SDL_GL_SetAttribute(SDL_GL_RETAINED_BACKING, 0);
    // SDL_GL_SetAttribute(SDL_GL_DOUBLEBUFFER, 0);

I wrote a nasty macro to log the value of any of these (is anyone better with macros? The doubled parameter annoys me):

    // do/while(0) keeps both statements together as one statement
    #define logGottenGlAtrib(name, literalAttrib) do { \
            SDL_GL_GetAttribute(literalAttrib, &resultInt); \
            SDL_Log("contexts %s %i", name, resultInt); \
        } while (0)

    int resultInt = 0;

    logGottenGlAtrib("SDL_GL_DEPTH_SIZE", SDL_GL_DEPTH_SIZE);
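The doubled parameter can be avoided with the preprocessor's stringizing operator #, which turns the macro argument into a string literal. To keep this sketch runnable without SDL, DemoAttr, demo_get_attribute and last_log are hypothetical stand-ins for SDL_GLattr, SDL_GL_GetAttribute and SDL_Log; in real code you would call SDL_GL_GetAttribute(attr, &value_) and SDL_Log("contexts %s %i", #attr, value_) inside the macro.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical stand-ins so this runs without SDL: DemoAttr mimics the
 * SDL_GLattr enum and demo_get_attribute mimics SDL_GL_GetAttribute. */
typedef enum { DEMO_DEPTH_SIZE, DEMO_ALPHA_SIZE } DemoAttr;

static int demo_get_attribute(DemoAttr attr, int *value) {
    *value = (attr == DEMO_ALPHA_SIZE) ? 8 : 0; /* fixed fake values */
    return 0;
}

/* Most recent log line, kept so it can be inspected. */
static char last_log[128];

/* #attr stringizes the argument, so the attribute is written only once.
 * do { ... } while (0) makes the body a single statement, which is safe
 * after an unbraced if/else. */
#define LOG_GL_ATTRIB(attr)                                   \
    do {                                                      \
        int value_ = 0;                                       \
        demo_get_attribute((attr), &value_);                  \
        snprintf(last_log, sizeof last_log, "contexts %s %i", \
                 #attr, value_);                              \
        puts(last_log);                                       \
    } while (0)
```

With this, LOG_GL_ATTRIB(DEMO_DEPTH_SIZE); prints "contexts DEMO_DEPTH_SIZE 0", and the SDL version would be called as LOG_GL_ATTRIB(SDL_GL_DEPTH_SIZE);.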

I am getting a result like this with the current settings:

    contexts SDL_GL_DEPTH_SIZE 0
    contexts SDL_GL_STENCIL_SIZE 0
    contexts SDL_GL_DOUBLEBUFFER 1
    contexts SDL_GL_RETAINED_BACKING 0
    contexts SDL_GL_BUFFER_SIZE 32
    contexts SDL_GL_RED_SIZE 8
    contexts SDL_GL_GREEN_SIZE 8
    contexts SDL_GL_BLUE_SIZE 8
    contexts SDL_GL_ALPHA_SIZE 8
    contexts SDL_GL_CONTEXT_MAJOR_VERSION 2
    contexts SDL_GL_CONTEXT_MINOR_VERSION 0
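In this log SDL_GL_ALPHA_SIZE comes back as 8 even though the active SetAttribute line requests 0. SDL documents the color and depth size attributes as minimums, so a larger value is allowed, but it means the configuration in use is not exactly the one requested. A small table comparing requested and obtained values makes such differences easy to spot per device; AttribCheck and report_attrib_mismatches are hypothetical names for this sketch, and the rows would be filled from SDL_GL_GetAttribute after the context is created.

```c
#include <assert.h>
#include <stdio.h>

/* One row per attribute: the value passed to SDL_GL_SetAttribute and the
 * value SDL_GL_GetAttribute reported after context creation. */
typedef struct {
    const char *name;
    int requested;
    int actual;
} AttribCheck;

/* Logs each attribute whose obtained value differs from the request and
 * returns how many differed. */
static int report_attrib_mismatches(const AttribCheck *rows, size_t n) {
    int mismatches = 0;
    for (size_t i = 0; i < n; i++) {
        if (rows[i].requested != rows[i].actual) {
            printf("%s: requested %d, got %d\n",
                   rows[i].name, rows[i].requested, rows[i].actual);
            mismatches++;
        }
    }
    return mismatches;
}
```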

OpenGL reports that we are on OpenGL ES 2.0.
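On an ES context, glGetString(GL_VERSION) returns a string of the form "OpenGL ES <major>.<minor>" followed by vendor-specific text, so the version can also be checked programmatically and logged next to GL_RENDERER to tell devices apart in pre-launch reports. A minimal parser, as a sketch (parse_gles_version is a hypothetical helper; in real code the input would be (const char *)glGetString(GL_VERSION)):

```c
#include <assert.h>
#include <stdio.h>

/* Parses "OpenGL ES <major>.<minor> ..." as returned by
 * glGetString(GL_VERSION) on an ES context. Returns 1 on success,
 * 0 for NULL or any string not in that form (e.g. desktop GL). */
static int parse_gles_version(const char *s, int *major, int *minor) {
    if (s == NULL) return 0;
    return sscanf(s, "OpenGL ES %d.%d", major, minor) == 2;
}
```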

I'm using a single call to SDL_GL_SwapWindow(window); when I'm done rendering. Also worth noting: since this seemed like a vsync issue (some sort of overeager automatic buffer swapping), I have tried disabling vsync and also explicitly enabling it. Here is what I have figured out so far:

- Only the final glDrawArrays call ever shows up. When I comment out the later calls, my earlier draw calls work perfectly, but when I try to render the UI (with or without blending enabled), it seems like the frame is either cleared or corrupted somehow.

I tried adding glFlush() and similar calls between draws. I will probably experiment with sprinkling glFlush() on every other line of code next… I'm running out of ideas.
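glFlush() only pushes queued commands to the GPU; it does not report errors. Checking glGetError() after each call instead narrows down the first call that fails. In this sketch the getter is a function pointer so it runs without a GL context: demo_get_error, demo_queue and GL_CHECK are hypothetical, and in real code you would pass glGetError in place of demo_get_error (on typical Android headers it returns GLenum, an unsigned int, with no calling-convention annotation).

```c
#include <assert.h>
#include <stdio.h>

#define DEMO_GL_NO_ERROR 0u /* same value as GL_NO_ERROR in the GLES headers */

typedef unsigned (*GetErrorFn)(void);

/* Reads errors until the queue is empty and logs each one with the call
 * that preceded it. Returns the number of errors seen. The cap keeps a
 * misbehaving getter from looping forever. */
static int report_gl_errors(GetErrorFn get_error, const char *call,
                            const char *file, int line) {
    int count = 0;
    unsigned err;
    while (count < 16 && (err = get_error()) != DEMO_GL_NO_ERROR) {
        fprintf(stderr, "%s:%d: %s -> GL error 0x%04X\n", file, line, call, err);
        count++;
    }
    return count;
}

/* Hypothetical stand-in for glGetError: pops queued codes, then returns 0. */
static unsigned demo_queue[4];
static int demo_queued;
static unsigned demo_get_error(void) {
    return demo_queued > 0 ? demo_queue[--demo_queued] : DEMO_GL_NO_ERROR;
}

/* Runs the call, then reports any errors it left behind; evaluates to
 * the error count. Real code would pass glGetError here. */
#define GL_CHECK(call) \
    ((void)(call), report_gl_errors(demo_get_error, #call, __FILE__, __LINE__))
```

In real code each call would be wrapped, for example GL_CHECK(glDrawArrays(GL_TRIANGLES, 0, 6));, and the first logged line points at the call to investigate.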

Also, calls like

    glUniform4f(uniformLocations->ui_position,
                renderObj->renderRect.x + 0.1,
                -renderObj->renderRect.y + 0.1,
                0.0,
                0.0);

seem to take effect out of order relative to the draw call, since I am rendering in a loop. While it's clear that some of these, such as position, work, others like color don't work at all (if I hardcode a value, it goes into the shader nicely, but otherwise I get a seemingly random value from a random object instead of the uniform value I just set). I can experiment with setting the uniforms a different way, but since hardcoded values work, I can't conclude that the function above is broken, only that it's not working the way I expect.
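Two GL ES 2.0 rules are worth ruling out here: glUniform* writes to the program currently bound with glUseProgram, and glGetUniformLocation returns -1 for a name that is not an active uniform (misspelled, or optimized out by the shader compiler), in which case glUniform* calls are silently ignored. A quick check of the cached locations, as a sketch: UiUniformLocations and its ui_color field are hypothetical, and int stands in for GLint.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical cache of uniform locations for the UI shader. */
typedef struct {
    int ui_position;
    int ui_color;
} UiUniformLocations;

/* Returns the name of the first uniform whose location is -1 (so any
 * glUniform* call on it would be ignored), or NULL if all were found. */
static const char *first_missing_uniform(const UiUniformLocations *loc) {
    if (loc->ui_position == -1) return "ui_position";
    if (loc->ui_color == -1) return "ui_color";
    return NULL;
}
```

Running this once after linking and querying the locations would show whether the color uniform is actually reachable before looking at draw ordering.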

I will try to get some more screenshots soon… I have some Expected/Actual screenshots, but they are really random and probably not helpful. Whatever I do, only one glDrawArrays call seems to show up in the framebuffer, and it's usually the final one.

Some other odd things: if rendering is working for a single (final UI) object and I then clear the depth and/or stencil buffer between objects (even though the depth and stencil tests are disabled and depth and stencil writes are off), clearing those buffers leads to nothing being visible, just a blank screen.

I don't want to point fingers or draw any grand conclusions, but it seems intentionally broken, so I'm wondering: is there any option to target these devices now? Do I have to use ANGLE? Many thanks in advance for any input on this topic! I'm not trying to help sell newer phones; I'm trying to sell an app that actually works on whatever devices users have. Now that seems impossible, and (together with publishing an AAB that didn't work at all) it has made my app's Android launch pretty unsuccessful.

I have one idea: since I can get one draw call to work, maybe I can do all my rendering to some other target (a texture or a non-default framebuffer) and then render that to the default framebuffer. But it's a bit more work than I've committed to so far, and I'm fairly sure I could run into a very similar issue with that approach. Thanks!
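If the offscreen route is tried, glCheckFramebufferStatus(GL_FRAMEBUFFER) should be called after attaching the texture and before drawing into it; any result other than GL_FRAMEBUFFER_COMPLETE means drawing into it generates GL_INVALID_FRAMEBUFFER_OPERATION. A helper that names the ES 2.0 status codes keeps the log readable. This is a sketch: the DEMO_ constants copy the values of the GL_FRAMEBUFFER_* constants from GLES2/gl2.h so it builds without GL headers, and framebuffer_status_name is a hypothetical name.

```c
#include <assert.h>
#include <string.h>

/* Values of the glCheckFramebufferStatus results in GLES2/gl2.h,
 * repeated here so the sketch compiles without GL headers. */
enum {
    DEMO_FRAMEBUFFER_COMPLETE                      = 0x8CD5,
    DEMO_FRAMEBUFFER_INCOMPLETE_ATTACHMENT         = 0x8CD6,
    DEMO_FRAMEBUFFER_INCOMPLETE_MISSING_ATTACHMENT = 0x8CD7,
    DEMO_FRAMEBUFFER_INCOMPLETE_DIMENSIONS         = 0x8CD9,
    DEMO_FRAMEBUFFER_UNSUPPORTED                   = 0x8CDD
};

/* Maps a status code to its GL constant name for logging. */
static const char *framebuffer_status_name(unsigned status) {
    switch (status) {
    case DEMO_FRAMEBUFFER_COMPLETE:
        return "GL_FRAMEBUFFER_COMPLETE";
    case DEMO_FRAMEBUFFER_INCOMPLETE_ATTACHMENT:
        return "GL_FRAMEBUFFER_INCOMPLETE_ATTACHMENT";
    case DEMO_FRAMEBUFFER_INCOMPLETE_MISSING_ATTACHMENT:
        return "GL_FRAMEBUFFER_INCOMPLETE_MISSING_ATTACHMENT";
    case DEMO_FRAMEBUFFER_INCOMPLETE_DIMENSIONS:
        return "GL_FRAMEBUFFER_INCOMPLETE_DIMENSIONS";
    case DEMO_FRAMEBUFFER_UNSUPPORTED:
        return "GL_FRAMEBUFFER_UNSUPPORTED";
    default:
        return "unknown framebuffer status";
    }
}
```

GL_FRAMEBUFFER_UNSUPPORTED in particular would indicate that the device rejects the chosen texture format for rendering, which is worth knowing before committing to this approach.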