Hello everyone,

I am trying to figure out a problem that I am having with porting my app to UWP.

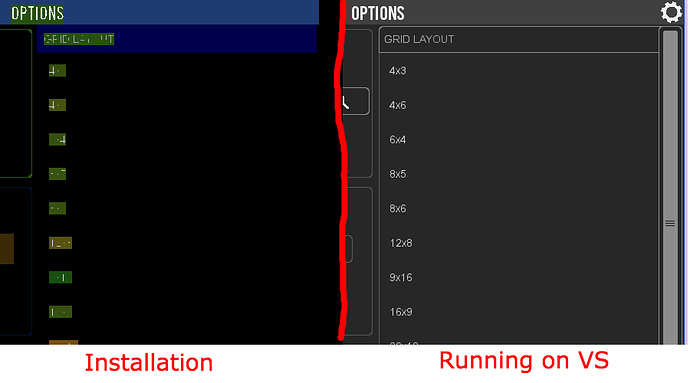

The application works fine except for the rendering. On my development system it looks fine, but once I install it on another system it looks rubbish. I found that if I set the SDL_RENDERER_SOFTWARE flag when I create the renderer, I can reproduce the rubbish rendering on my development system as well. I have tried every combination of render driver and flags, and none of them works.

Using the default render driver (direct3d11) with SDL_RENDERER_SOFTWARE, SDL_RENDERER_ACCELERATED, SDL_RENDERER_PRESENTVSYNC and SDL_RENDERER_TARGETTEXTURE.

Using the render driver opengles2 with SDL_RENDERER_SOFTWARE, SDL_RENDERER_ACCELERATED, SDL_RENDERER_PRESENTVSYNC and SDL_RENDERER_TARGETTEXTURE. That doesn’t even let me create a renderer; it fails with an "invalid window" error. I set the SDL_WINDOW_OPENGL flag when creating the window, but that didn’t change anything.

Last, using the render driver software with SDL_RENDERER_SOFTWARE, SDL_RENDERER_ACCELERATED, SDL_RENDERER_PRESENTVSYNC and SDL_RENDERER_TARGETTEXTURE. Again nothing changed: the rendering is still rubbish.
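To check which renderer each combination actually ends up with, the flags reported back by SDL could be decoded with something like the sketch below. It is only an illustration under a few assumptions: `describe_renderer_flags` is a hypothetical helper name, and the constants are local copies of the SDL2 `SDL_RendererFlags` values so that the snippet compiles without SDL. In the real app one would include `SDL.h` and use the SDL names directly.

```c
#include <stdio.h>
#include <string.h>

/* Local mirrors of SDL2's SDL_RendererFlags values (assumption: SDL 2.x).
 * In a real build, include SDL.h and use the SDL_RENDERER_* names instead. */
enum {
    FLAG_SOFTWARE      = 0x00000001, /* SDL_RENDERER_SOFTWARE */
    FLAG_ACCELERATED   = 0x00000002, /* SDL_RENDERER_ACCELERATED */
    FLAG_PRESENTVSYNC  = 0x00000004, /* SDL_RENDERER_PRESENTVSYNC */
    FLAG_TARGETTEXTURE = 0x00000008  /* SDL_RENDERER_TARGETTEXTURE */
};

/* Hypothetical helper: writes the names of all set flags into buf,
 * separated by " | ", or "(none)" if no known flag is set. Returns buf. */
const char *describe_renderer_flags(unsigned int flags, char *buf, size_t size)
{
    static const struct { unsigned int bit; const char *name; } table[] = {
        { FLAG_SOFTWARE,      "SOFTWARE" },
        { FLAG_ACCELERATED,   "ACCELERATED" },
        { FLAG_PRESENTVSYNC,  "PRESENTVSYNC" },
        { FLAG_TARGETTEXTURE, "TARGETTEXTURE" }
    };
    size_t i;

    if (size == 0) {
        return buf;
    }
    buf[0] = '\0';
    for (i = 0; i < sizeof table / sizeof table[0]; ++i) {
        if (flags & table[i].bit) {
            size_t used = strlen(buf);
            /* Append a separator only after the first name. */
            snprintf(buf + used, size - used, "%s%s",
                     used ? " | " : "", table[i].name);
        }
    }
    if (buf[0] == '\0') {
        snprintf(buf, size, "(none)");
    }
    return buf;
}
```

In the app this would be called right after the renderer is created, by filling an `SDL_RendererInfo` with `SDL_GetRendererInfo(renderer, &info)` and logging `info.name` together with `describe_renderer_flags(info.flags, buf, sizeof buf)`. That way the driver and flags SDL actually picked can be compared between the development system and the other one.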

What can I do? What am I missing?

The screenshot above shows what I mean by rubbish.

Thank you